"Social Media Algorithms' Negative Impact in 2026"

Social media has woven itself into the fabric of daily life, promising connection, information, and entertainment. Yet beneath the surface of endless scrolls and personalized feeds lies a powerful force shaping our behaviors, emotions, and even societal structures: social media algorithms.

In 2026, these sophisticated AI-driven systems have grown more refined and pervasive than ever. While they aim to deliver engaging content, their core design—to maximize user time and attention—often comes at a significant personal and collective cost. This article examines the negative impact of social media algorithms, drawing on emerging research, real-world observations, and platform developments.

From fueling addiction and harming mental health to amplifying division and distorting reality, the downsides deserve careful consideration. Understanding how social media algorithms operate is the first step toward mitigating their influence.

How Social Media Algorithms Work in 2026

At their foundation, social media algorithms are complex recommendation engines powered by machine learning and vast amounts of user data. They don't simply show posts chronologically. Instead, they predict what will keep each individual scrolling longer.

The process typically involves several stages:

1. Content Collection: Platforms gather eligible posts from accounts you follow, plus a wider pool based on similar interests.

2. Relevance Scoring: AI analyzes thousands of signals—watch time, completion rate, likes, comments, shares, saves, and even subtle behaviors like pause duration or re-watches.

3. Personalization: Using embedding models, the algorithm matches content to your inferred interests, past interactions, and predicted preferences.

4. Distribution Testing: Content starts with a small audience. Strong early engagement expands its reach exponentially.
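The four stages above can be sketched in a few lines of Python. This is a toy illustration under stated assumptions, not any platform's actual code: the `Post` fields, the `score` formula, and the `threshold` and `growth` parameters are hypothetical stand-ins for models and settings that platforms keep private.

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass
class Post:
    post_id: str
    author: str
    topic_vector: Tuple[float, ...]   # hypothetical content embedding
    predicted_watch_seconds: float    # hypothetical engagement prediction


def collect_candidates(posts, followed, extra_pool_size=2):
    """Stage 1: posts from followed accounts plus a wider pool."""
    followed_posts = [p for p in posts if p.author in followed]
    others = [p for p in posts if p.author not in followed]
    return followed_posts + others[:extra_pool_size]


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def score(post, user_vector):
    """Stages 2 and 3: engagement prediction weighted by interest match."""
    interest_match = max(dot(post.topic_vector, user_vector), 0.0)
    return post.predicted_watch_seconds * interest_match


def rank_feed(posts, followed, user_vector, k=3):
    """Return the k highest-scoring candidates, best first."""
    candidates = collect_candidates(posts, followed)
    return sorted(candidates, key=lambda p: score(p, user_vector), reverse=True)[:k]


def next_audience_size(current, engagement_rate, threshold=0.1, growth=10):
    """Stage 4: widen distribution only if the test audience engaged strongly."""
    return current * growth if engagement_rate >= threshold else current


posts = [
    Post("a", "friend", (1.0, 0.0), 10.0),
    Post("b", "stranger", (0.0, 1.0), 30.0),
    Post("c", "stranger2", (0.5, 0.5), 20.0),
]
feed = rank_feed(posts, followed={"friend"}, user_vector=(0.0, 1.0))
print([p.post_id for p in feed])  # ['b', 'c', 'a']
```

Note that the followed account's post ranks last in this example: the interest match, not the follow relationship, decides the order.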

In 2026, platforms like TikTok, Instagram, YouTube, and Facebook have shifted heavily toward interest-graph recommendations over simple follow graphs. Your followers matter less than whether the content matches your behavioral profile. Watch time and meaningful interactions, such as DM shares, now outweigh raw like counts on many platforms.
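To make the weighting idea concrete, here is a minimal sketch of a score in which watch time and DM shares count for more than likes. The signal names and every weight in `SIGNAL_WEIGHTS` are invented for illustration; platforms do not publish their real values.

```python
# Hypothetical weights, chosen only to illustrate the ordering described above.
SIGNAL_WEIGHTS = {
    "likes": 1.0,
    "comments": 3.0,
    "dm_shares": 8.0,
    "avg_watch_fraction": 50.0,  # share of the video watched, from 0.0 to 1.0
}


def engagement_score(signals):
    """Weighted sum of engagement signals; missing signals count as zero."""
    return sum(
        weight * signals.get(name, 0.0)
        for name, weight in SIGNAL_WEIGHTS.items()
    )


heavily_liked = {"likes": 40, "comments": 2, "dm_shares": 0, "avg_watch_fraction": 0.2}
widely_shared = {"likes": 10, "comments": 2, "dm_shares": 5, "avg_watch_fraction": 0.9}

# The post with fewer likes still wins, because it is watched and shared more.
print(engagement_score(widely_shared) > engagement_score(heavily_liked))  # True
```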

This precision makes feeds incredibly sticky. TikTok's For You Page, for instance, learns rapidly from minimal input, serving hyper-relevant videos that can hold attention for hours. Instagram prioritizes Reels with strong retention curves, while YouTube recommendations blend long-form and Shorts based on predicted satisfaction.

The result? Feeds that feel tailor-made but are engineered for engagement above all else. This design philosophy drives many of the negative impacts of social media algorithms.

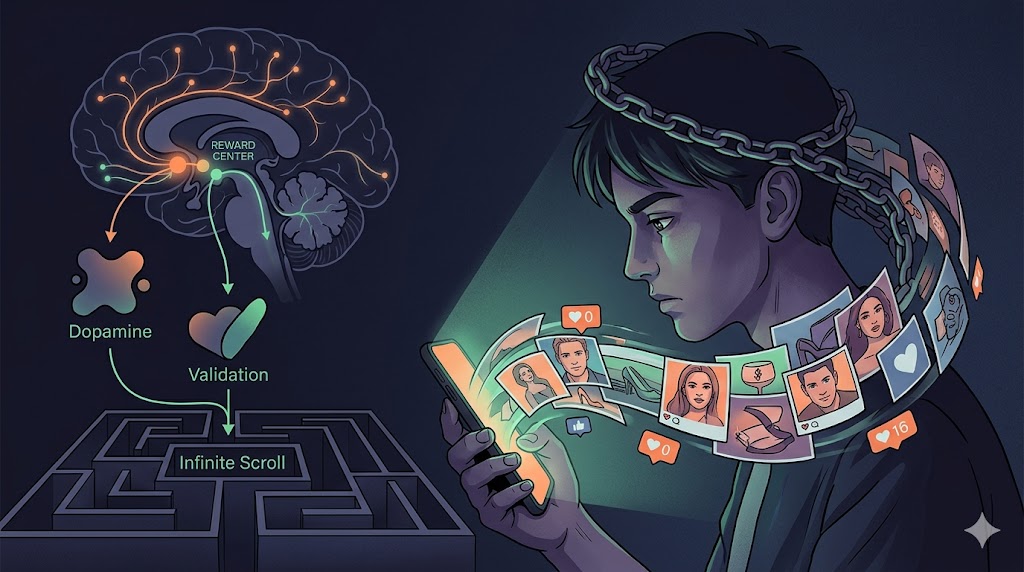

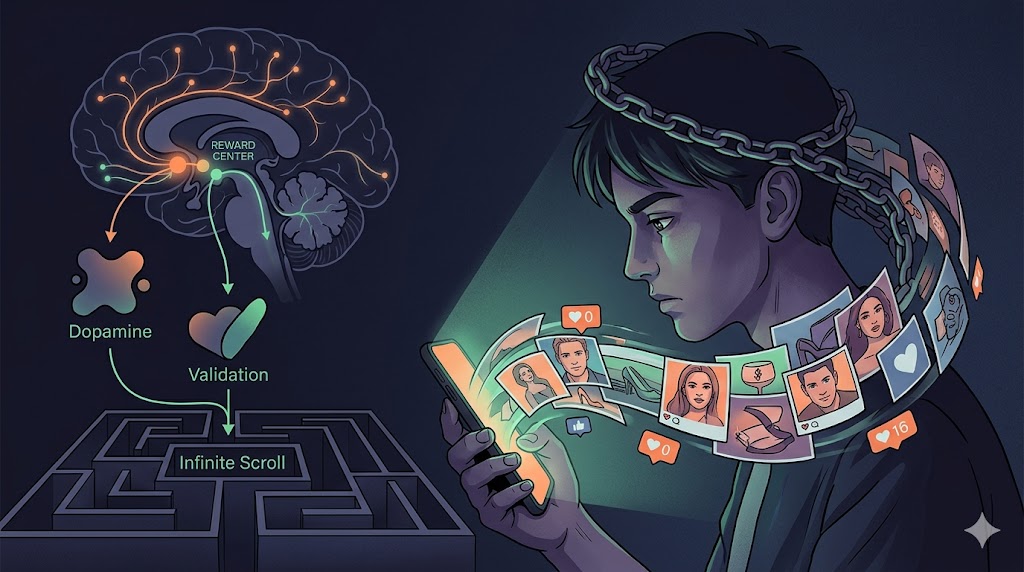

One of the most documented dangers is how social media algorithms exploit the brain's reward system. Infinite scroll, variable rewards (such as an unpredictable like or comment), autoplay, and push notifications create compulsive usage patterns similar to those of slot machines.

Dopamine hits from engaging content reinforce the behavior, making it harder to stop. In 2026, studies continue to link heavy use, often driven by algorithmic feeds, to addiction-like symptoms, especially among adolescents. Platforms track every micro-interaction to refine what keeps users glued, sometimes prioritizing younger users for their higher engagement potential.

TikTok frequently tops lists as the most addictive platform due to its advanced algorithm that quickly identifies and amplifies content matching a user's evolving tastes—even if those tastes lean toward harmful material. Users can slip into rabbit holes of negative content before realizing hours have passed.

Research on adolescents has found that spending more than three hours a day on these platforms is associated with roughly double the risk of poor mental health outcomes, including symptoms of depression and anxiety. The algorithm doesn't just respond to your preferences; it actively shapes them by serving more of what elicits strong emotional reactions, positive or negative.

This creates a feedback loop in which algorithm-driven addiction becomes self-perpetuating, reducing real-world productivity, sleep quality, and face-to-face interactions.
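The feedback loop can be illustrated with a small, deterministic simulation. It is a deliberately simplified sketch: the topic names, the `reaction_strength` values, and the update rule are assumptions made for illustration, and a real recommender would mix many items rather than always serving a single top topic.

```python
def simulate_feedback_loop(interest, reaction_strength, rounds=20, learning_rate=0.2):
    """Each round, serve the topic with the highest expected reaction,
    then shift the user's interest profile toward whatever was served."""
    interest = dict(interest)  # copy so the caller's profile is untouched
    for _ in range(rounds):
        served = max(interest, key=lambda t: interest[t] * reaction_strength[t])
        for topic in interest:
            target = 1.0 if topic == served else 0.0
            interest[topic] += learning_rate * (target - interest[topic])
    return interest


# Hypothetical starting profile: outrage is a minority interest...
start = {"hobby": 0.5, "outrage": 0.3, "news": 0.2}
# ...but it provokes the strongest reaction per view.
reactions = {"hobby": 1.0, "outrage": 2.0, "news": 1.0}

end = simulate_feedback_loop(start, reactions)
print(max(end, key=end.get))  # outrage
```

In this toy model, a topic that began as less than a third of the user's interests ends up dominating the profile, purely because it draws stronger reactions each time it is served.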


Mental Health Toll: Anxiety, Depression, and Self-Worth Erosion

The negative impact of social media algorithms on mental health stands out as particularly concerning in 2026. Algorithm-driven feeds excel at surfacing emotionally charged or idealized content, triggering frequent upward social comparison.

Seeing curated highlight reels of others' lives (perfect bodies, luxurious vacations, career successes) can erode self-esteem and fuel feelings of inadequacy. This effect hits younger users hardest, because their developing brains are more susceptible to validation-seeking through likes and comments.

Global happiness reports note that apps heavy on algorithmic scrolling, such as Instagram and TikTok, correlate with lower life satisfaction compared to connection-focused platforms like WhatsApp. Doomscrolling, where algorithms push sensational or negative news, reinforces anxiety and sadness.

Experimental studies on reducing or abstaining from social media show improvements in mood, sleep, and overall well-being, strengthening evidence of causation rather than mere correlation. Jury verdicts in 2026 have even held certain platforms accountable for designing addictive features that contributed to depression and anxiety in young users.

For many, the constant pressure of maintaining an online persona, combined with algorithmic amplification of comparison triggers, creates a toxic environment that algorithms are optimized to sustain—not because they intend harm, but because engagement equals revenue.

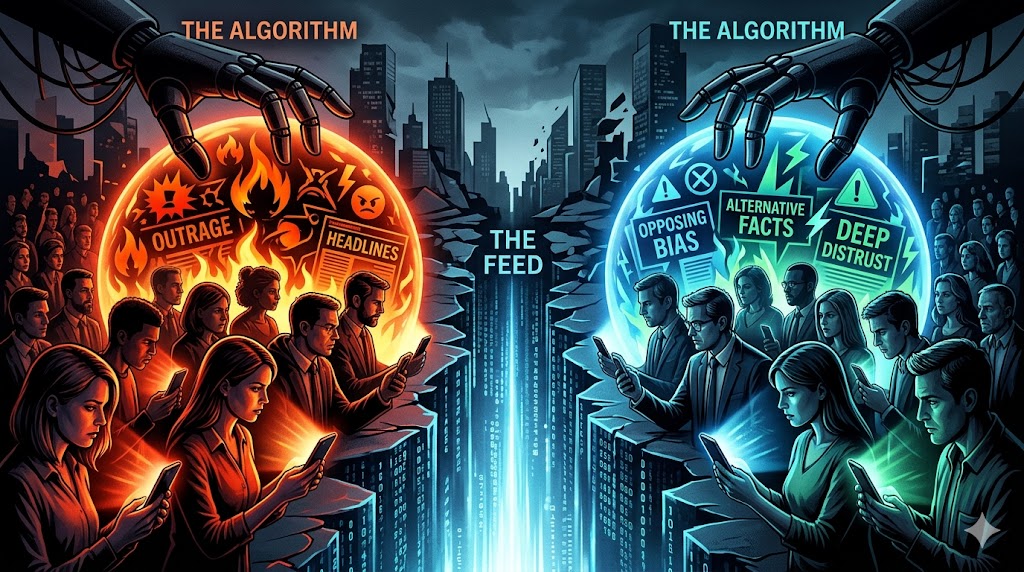

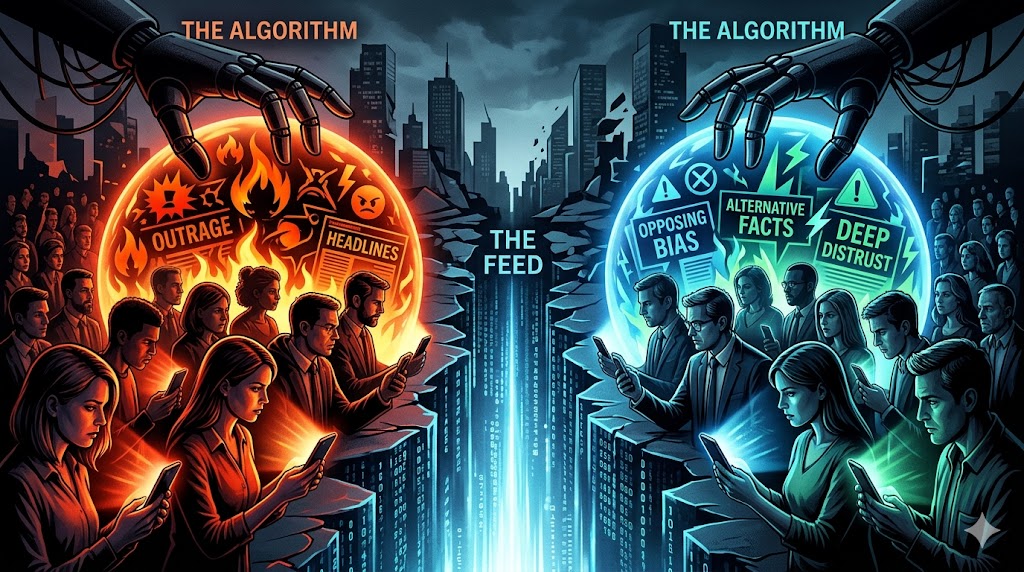

Polarization and Echo Chambers: Dividing Societies

Beyond individual harm, social media algorithms influence collective discourse. By prioritizing content that generates strong reactions, they often amplify extreme or emotionally provocative material. Outrage travels faster and farther than nuance.

Filter bubbles and echo chambers emerge as algorithms show users more of what aligns with their existing views, reducing exposure to diverse perspectives. On some platforms, algorithmic tweaks have demonstrably shifted political opinions by promoting certain types of content while demoting traditional media sources.
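One rough way to quantify how narrow a feed has become is to measure the diversity of viewpoints it contains. The sketch below uses Shannon entropy over viewpoint labels; the labels and the two sample feeds are hypothetical, and assigning a single label to each post is itself a strong simplification.

```python
import math
from collections import Counter


def viewpoint_entropy(feed_labels):
    """Shannon entropy (in bits) of the viewpoint mix in a feed.
    Higher values mean more evenly spread exposure; 0 means one viewpoint only."""
    counts = Counter(feed_labels)
    total = len(feed_labels)
    return -sum((c / total) * math.log2(c / total) for c in counts.values())


balanced_feed = ["left", "right", "center", "left", "right", "center"]
narrowed_feed = ["left", "left", "left", "left", "left", "center"]

# The narrowed feed scores lower, reflecting reduced exposure to other views.
print(viewpoint_entropy(balanced_feed) > viewpoint_entropy(narrowed_feed))  # True
```

Tracking a measure like this over time would show whether a feed is drifting toward a single perspective.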

This dynamic contributes to increased societal polarization. Disinformation spreads rapidly when algorithms favor virality over accuracy, with AI-generated content adding new layers of complexity in 2026. Cognitive manipulation through synthetic media threatens democratic processes by distorting shared realities.

The pursuit of engagement can turn platforms into arenas where division thrives, as calm, balanced discussions rarely match the retention metrics of heated debates.

Distorted Reality and Reduced Critical Thinking

Prolonged exposure to algorithmically curated feeds can warp perceptions of the world. When most content is selected for maximum emotional impact, users encounter a skewed version of reality—one filled with extremes rather than everyday nuance.

Such skewed exposure can affect memory formation, contextual thinking, and analytical skills. Some experts warn of "algorithmic burnout" and AI overwhelm as users struggle to process the constant stream of optimized stimuli.

Young people, in particular, may develop unrealistic expectations about relationships, success, and appearance. The algorithm's tendency to push trending or sensational topics can also crowd out deeper learning or creative pursuits.

Furthermore, over-reliance on personalized feeds reduces serendipity—the chance encounters with unexpected ideas that foster growth and innovation.

Platform-Specific Concerns in 2026

Different platforms manifest these issues uniquely:

TikTok and Instagram Reels: Hyper-optimized for short-form video, they excel at rapid addiction formation through seamless, endless recommendations.

YouTube: Its recommendation engine can lead users from innocuous videos to more extreme content, with reports of "regrettable" suggestions persisting.

Facebook and X: Feeds blending social connections with algorithmic predictions can intensify echo chambers around news and politics.

Emerging AI Features: Generative tools and deeper personalization raise fresh concerns about authenticity and manipulation.

Across the board, the business model remains tied to attention metrics, creating inherent tension with user well-being.

Regulatory Responses and Corporate Responsibility

By 2026, scrutiny has intensified. Lawsuits, age-appropriate design regulations, and calls for greater transparency highlight the need for platforms to balance profit with protection—especially for minors.

Some platforms have introduced tools like topic management, "Not Interested" options, and usage reminders. However, critics argue these are insufficient without fundamental changes to engagement-driven algorithms.

Experts advocate for designs prioritizing meaningful connection over compulsive scrolling, along with independent audits of algorithmic impacts.

Mitigating the Negative Impact: Practical Strategies

While completely avoiding social media may not be realistic for everyone, individuals can take steps to reduce harm:

Set Boundaries: Use built-in screen time limits and schedule specific usage windows.

Curate Intentionally: Actively use "Not Interested," mute, or block features to train the algorithm toward healthier content.

Prioritize Connection: Favor direct messaging or smaller groups over passive feed scrolling.

Diversify Inputs: Balance online time with offline activities, reading, and in-person interactions.

Mindful Consumption: Question emotional reactions and seek diverse sources beyond algorithmic suggestions.

Digital Detoxes: Periodic breaks can reset habits and improve mental clarity.

Parents and educators play vital roles in teaching media literacy and modeling healthy habits.

For creators and marketers, focusing on authentic, value-driven content rather than pure virality offers a more sustainable path, even if it means slower initial growth.

Looking Ahead: Can Algorithms Serve Us Better?

The negative impact of social media algorithms in 2026 underscores a fundamental mismatch: systems optimized for corporate metrics rather than human flourishing. As AI capabilities advance, the potential for both greater harm and more empathetic design grows.

Some envision a future with user-controlled algorithms, transparency requirements, or incentives aligned with well-being. Others call for broader cultural shifts toward intentional technology use.

Ultimately, awareness is key. By understanding how social media algorithms work and their documented downsides, individuals and society can make more informed choices.


Conclusion: Reclaiming Agency in an Algorithmic Age

Social media algorithms have transformed how we discover information, connect with others, and spend our time. Their negative effects—addiction, mental health challenges, polarization, and distorted worldviews—represent some of the most pressing digital issues of our era.

In 2026, as these systems become even more sophisticated, the responsibility falls on platforms, regulators, and users alike. Platforms must move beyond engagement-at-all-costs. Regulators need to enforce meaningful safeguards. And as individuals, we must cultivate awareness and discipline in our digital lives.

Social media isn't inherently evil, but its current algorithmic foundations often prioritize profit over people. By acknowledging the dangers of social media algorithms and actively managing our exposure, we can harness the benefits while minimizing the harms.

The power to shape your online experience ultimately rests with you. Question what the algorithm serves. Choose content and platforms that add real value. And remember that the most meaningful connections and insights often exist beyond the feed.